A Practitioner’s Treatise on the Doctrine, the Cases, and the Foundation That Holds

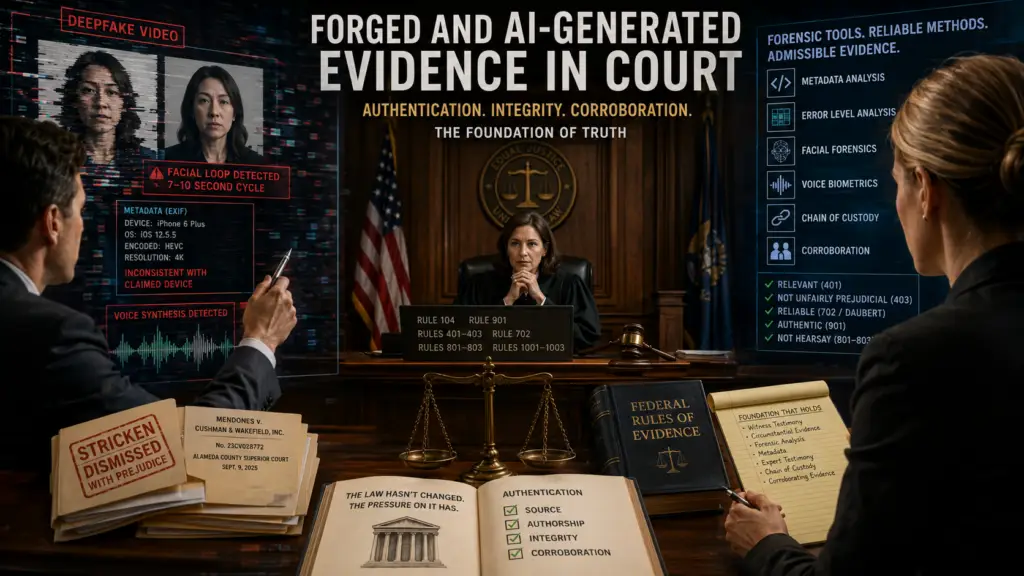

Judge Victoria Kolakowski sat in her Alameda County courtroom in September 2025, watching a video the plaintiffs had submitted to defeat summary judgment. Something was off. The witness in the recording spoke in a flat monotone. Her facial expressions twitched in a seven-to-ten-second loop. The metadata claimed an iPhone 6 Plus running iOS 12.5.5, but the visual processing required Apple Intelligence, a feature that ships only on iPhone 15 Pro and later. The judge issued an order to show cause. At the hearing, plaintiff Maridol Mendones admitted some of the witnesses depicted were deceased, others uncontactable, and that generative AI had produced the footage. The court struck the operative complaint and dismissed with prejudice. Mendones v. Cushman & Wakefield, Inc., No. 23CV028772 (Cal. Super. Ct. Alameda Cnty. Sept. 9, 2025), reconsideration denied (Nov. 6, 2025).

That ruling is the first published civil decision in which a sitting trial judge personally caught and sanctioned deepfake exhibits in the record. It will not be the last. Federal rule drafters have studied the problem for two years and circulated draft language. Louisiana enacted the first state statute on AI-generated evidence. Scholars have published burden-shifting frameworks. None of it has produced a finished doctrine. The governing law, as of May 2026, remains the ordinary rules of evidence: Federal Rules 104, 401–403, 702, 801–803, 901, 902, and 1001–1003. The architecture has not changed. The pressure on it has.

This article synthesizes the case law, the pending rule amendments, the forensic methods that hold up under cross, and the litigation practices that win. It is written for the practitioner who needs to lay or break a foundation under hostile examination. It treats deepfakes, AI-enhanced video, fabricated screenshots, and old-fashioned text-message forgery as a single continuum, because that is how the cases treat them. Source, authorship, integrity, and corroboration are the recurring foundation issues, regardless of whether the manipulation is generative AI or a phone in the wrong hands.

I. The Doctrinal Frame

The federal architecture for digital evidence has not been redesigned. Rule 104(a) puts threshold admissibility questions in the judge’s hands. Fed. R. Evid. 104(a). Rule 901(a) requires the proponent to produce evidence “sufficient to support a finding that the item is what the proponent claims it is.” Fed. R. Evid. 901(a). Rule 902 supplies limited self-authentication routes, of which subsections (13) and (14) matter most for digital evidence. Fed. R. Evid. 902(13)–(14). Rules 401–403 control relevance and unfair prejudice. Rule 702 governs expert reliability. Daubert v. Merrell Dow Pharm., Inc., 509 U.S. 579 (1993). Rules 801–803 address hearsay inside text threads, voicemails, and chat logs. Rules 1001–1003 govern originals, duplicates, screenshots, and exports.

The architecture is not the problem. The pressure on Rule 901’s “sufficient to support a finding” standard is.

Authentication has long been a low bar. Lorraine v. Markel Am. Ins. Co., 241 F.R.D. 534, 542 (D. Md. 2007) (Grimm, M.J.), the foundational opinion on electronic evidence, treats authentication as a threshold the proponent clears with modest proof. The Lorraine court warned that any serious consideration of the requirement to authenticate electronic evidence must account for tools that can fabricate or alter content. Id. at 543. The warning was prescient. The bar that worked for a 2007 e-mail does not work for a 2025 voice clone.

The recurring confusion in litigation is the conflation of authenticity with hearsay. The Third Circuit drew the line cleanly in United States v. Browne, 834 F.3d 403, 415 (3d Cir. 2016), where the government tried to self-authenticate Facebook chats through the platform’s records custodian. The court rejected that move. Facebook’s certification proved the platform stored the records. It did not prove who typed the messages inside. The court still admitted the chats, but only because the government produced extrinsic evidence linking Browne to the account and the conversations. Id. at 412–14. The lesson generalizes. Provider records prove the platform has the data. Authorship requires more.

State courts apply the same basic test under different labels. Maryland’s Mooney v. State, No. 32, Sept. Term 2023 (Md. 2024), confirms that video may be authenticated through witness testimony plus circumstantial evidence under a reasonable-juror preponderance threshold. Texas in Tienda v. State, 358 S.W.3d 633, 639 (Tex. Crim. App. 2012), and Butler v. State, 459 S.W.3d 595, 601 (Tex. Crim. App. 2015), accepts circumstantial authentication when distinctive characteristics tie the content to the alleged author. Pennsylvania’s Light (Kipp) v. Esbenshade, No. 2009-20401 (Pa. C.P. Lebanon Cnty. 2013), takes a stricter view in family litigation, demanding corroboration beyond a phone number alone.

Two early cases still earn citation today. In Griffin v. State, 419 Md. 343, 19 A.3d 415 (2011), the prosecution offered MySpace pages with weak attribution to the defendant’s girlfriend. The Maryland high court reversed the trial court’s admission as an abuse of discretion. And in United States v. Vayner, 769 F.3d 125, 132 (2d Cir. 2014), the Second Circuit reversed a conviction because the government showed only that the social-network page identified the defendant, not that the defendant created or controlled it. Both cases now do most of the doctrinal work in deepfake disputes, because the same authentication question recurs unchanged: did this person actually generate this content?

II. Rule Changes Underway

Federal rule drafters have not been idle. Two proposals matter.

Proposed Rule 707 addresses machine-generated evidence. As published August 15, 2025, the text reads: “When machine-generated evidence is offered without an expert witness and would be subject to Rule 702 if testified to by a witness, the court may admit the evidence only if it satisfies the requirements of Rule 702(a)–(d). This rule does not apply to the output of basic scientific instruments.” Proposed Fed. R. Evid. 707 (published for public comment Aug. 15, 2025). The Committee Note explains that when a machine draws inferences and makes predictions, there are reliability concerns akin to those for expert witnesses. Id., Committee Note. The vote to publish for comment was 8–1, with the DOJ casting the sole dissent. Public comment closed February 16, 2026. If approved through the full Rules Enabling Act process, the earliest effective date is December 1, 2027.

Proposed Rule 901(c) addresses authentication of potentially fabricated AI evidence. The current Advisory Committee draft, from the June 10, 2025 Standing Committee Agenda Book, reads in full:

(c) Potentially Fabricated Evidence Created by Artificial Intelligence.

(1) Showing Required Before an Inquiry into Fabrication. A party challenging the authenticity of an item of evidence on the ground that it has been fabricated, in whole or in part, by generative artificial intelligence must present evidence sufficient to support a finding of such fabrication to warrant an inquiry by the court.

(2) Showing Required by the Proponent. If the opponent meets the requirement of (1), the item of evidence will be admissible only if the proponent demonstrates to the court that it is more likely than not authentic.

(3) Applicability. This rule applies to items offered under either Rule 901 or 902.

Proposed Fed. R. Evid. 901(c) (June 10, 2025 Standing Comm. Agenda Book at 89). The Advisory Committee declined to publish the draft for public comment in May 2025 and held it in the bullpen through the November 2025 Agenda Book. The most consequential feature is the burden shift. Once the opponent makes a prima facie showing of AI fabrication, the proponent must prove the exhibit is more likely than not authentic. That structure rewards proponents who can produce hash values, native files, and chain of custody. It punishes those who deliver only screenshots.

State law is moving faster than the federal process. Louisiana Act 250 (HB 178), signed June 11, 2025, effective August 1, 2025, amended La. Code Civ. Proc. art. 371 to require attorneys to exercise “reasonable diligence” in verifying authenticity, with attorney sanctions for violation. Act 250 also amended art. 1551 to give courts case-management authority over AI-generated exhibits. La. Code Civ. Proc. arts. 371, 1551 (2025). California SB 970 directs the Judicial Council, by January 1, 2026, to develop court rules for AI-generated evidence claims. Cal. SB 970 (2024). New Jersey created criminal penalties of up to five years and $30,000 for distributing deceptive AI content, effective April 2025. Tennessee’s ELVIS Act, effective July 1, 2024, was the first state right-of-publicity statute extending property protection to a person’s voice, with treble damages for knowing violation. Tenn. Code Ann. § 47-25-1101 et seq. (2024).

Federal regulation has been narrower. The TAKE IT DOWN Act, signed May 19, 2025, criminalizes nonconsensual intimate-image deepfakes and requires platforms to establish notice-and-removal procedures by May 19, 2026. 15 U.S.C. § 6851 (2025). Substantive deepfake regulation tied to elections has fared worse. California AB 2655 and AB 2839 were struck down in Kohls v. Bonta, No. 2:24-cv-02527 (E.D. Cal. Aug. 6, 2025; reaffirmed Nov. 21, 2025), on Section 230 preemption and First Amendment grounds. The procedural rules survive these challenges. The substantive prohibitions do not. That distinction will matter as more states draft.

III. The Cases That Did the Work

The case law clusters into four buckets: AI-altered or AI-generated exhibits excluded or sanctioned; the deepfake defense (sometimes called the liar’s dividend) raised and rejected; old-fashioned forgery brought into court; and AI-hallucinated citations sanctioned under Rule 11 and inherent power.

A. AI-Altered and AI-Generated Exhibits

Mendones anchors the civil side. The court found multiple video testimonials were deepfakes, found the metadata not reliable or credible, found photographs altered, and found chat exhibits likely AI-generated. Mendones, No. 23CV028772, slip op. at 8–11. The court imposed terminating sanctions under California Code of Civil Procedure § 128.7(b) and dismissed with prejudice. Lesser sanctions were insufficient because the deliberate use of deepfakes “fundamentally undermines the integrity of judicial proceedings.” Id. at 14.

State v. Puloka, No. 21-1-04851-2 KNT (Wash. Super. Ct. King Cnty. Mar. 29, 2024), is the criminal analogue. The defense in a triple-homicide trial sought to admit a 10-second iPhone/Snapchat video “enhanced” by an expert using Topaz Video AI plus Adobe processing. The State’s expert, Grant Fredericks, a 30-year forensic video analyst and FBI instructor, testified that the AI tool added 16 times the original pixel count, that the Scientific Working Group on Digital Evidence had warned against AI enhancement in forensic contexts, and that the FBI had no best-practices accommodation for it. The court excluded the AI-enhanced video under Washington’s Frye standard, holding that Topaz Video AI “ha[s] not been peer-reviewed by the forensic video analysis community, [is] not reproducible by that community, and [is] not accepted generally in that community.” Puloka, slip op. at 7. This is the first published U.S. ruling on AI-enhanced video in a criminal trial. Topaz Labs itself “strongly recommends against” forensic use of its product. Statement of Topaz Labs to NBC News (Apr. 2, 2024).

Matter of Weber, 2024 N.Y. Slip Op. 24258, 85 Misc. 3d 727 (Sur. Ct. Saratoga Cnty. 2024) (Schopf, S.), is the gatekeeping case. The objectant’s expert in a trustee accounting admitted using Microsoft Copilot to cross-check damages calculations but could not state his prompts, his data sources, or his methodology. The court ran the same Copilot prompt on three different computers and got three different answers. Weber, 85 Misc. 3d at 731. The court held that, given the rapid evolution of AI and its inherent reliability issues, counsel has “an affirmative duty to disclose the use of artificial intelligence and the evidence sought to be admitted should properly be subject to a Frye hearing prior to its admission.” Id. at 736. Weber is the first published decision to announce an affirmative AI-disclosure duty.

Kohls v. Ellison, 2025 WL 66514 (D. Minn. Jan. 10, 2025), is the irony case. Minnesota defended its election-deepfake statute against a First Amendment challenge by relying on an expert declaration from Stanford Professor Jeff Hancock. Hancock drafted the declaration with GPT-4o. It cited two academic articles that did not exist. The court struck the declaration: “signing a declaration under penalty of perjury is not a mere formality,” and unchecked AI use “shatters [the expert’s] credibility.” Id. at *3. An AI misinformation expert’s declaration was tainted by AI hallucinations. The court used the moment to issue a broader warning to the bar.

B. The Deepfake Defense

Huang v. Tesla, Inc., No. 19CV346663 (Cal. Super. Ct. Santa Clara Cnty. Apr. 2023), is the canonical rejection of the liar’s dividend. Tesla, defending a wrongful-death claim, argued that recorded statements by Elon Musk about Autopilot might be deepfakes and could not be authenticated. Judge Evette D. Pennypacker rejected the argument. Allowing it would mean public figures could “simply say whatever they like in the public domain, then hide behind the potential for their recorded statements being a deep fake to avoid taking ownership of what they did actually say and do.” Huang, slip op. at 3. The court ordered a three-hour deposition limited to whether Musk was the speaker.

The January 6 cases reached the same result. United States v. Doolin, No. 21-cr-00447 (D.D.C. 2022), and United States v. Reffitt, No. 21-cr-00032 (D.D.C. 2022), each involved defense challenges to open-source video evidence as potentially deepfaked. Both courts treated the challenge as going to weight, not admissibility. Circumstantial authentication under Rule 901(b)(4) was satisfied. Doolin received 18 months. The pattern: courts will not accept “this might be a deepfake” as a stand-alone basis for exclusion when the proponent has independent corroboration. The challenger needs evidence of fabrication, not the bare possibility of it.

C. Forged Digital Evidence

Rossbach v. Montefiore Med. Ctr., 81 F.4th 124 (2d Cir. 2023), is the leading emoji-forgery case. The Title VII plaintiff produced a JPEG of an iPhone screen showing sexually suggestive texts she claimed her supervisor sent. Forensic expert Daniel Regard at iDiscovery Solutions determined the photo was a forgery. The emoji visible in the screenshot did not exist on iOS 9.3.5, the highest iOS version her cracked iPhone 5 could run. Id. at 130. The Southern District dismissed with prejudice. The Second Circuit affirmed. Rossbach set the template: when the device cannot have produced the artifact shown in the screenshot, the screenshot is fake.

Gunter v. Alutiiq Advanced Sec. Sols., LLC, 2022 WL 1139875 (D. Md. Apr. 18, 2022), follows the same pattern with text-message printouts. A same-day forensic exam of the plaintiff’s phone recovered six previously unproduced authentic messages and confirmed the printouts were fraudulent. Sanctions issued under Rules 41(b), 37(e), and 26(g), and the court’s inherent power.

The Pikesville High School case is the leading real-world AI voice-clone matter. Athletic director Dazhon Darien, facing termination, generated AI-cloned audio of Principal Eric Eiswert appearing to make racist and antisemitic remarks, and disseminated the recording. Two forensic analysts plus a Google subpoena traced the recording back to Darien’s email and recovery phone. He was charged with disrupting school operations, theft, retaliation against a witness, and stalking. Eiswert filed a civil suit in January 2025. State of Maryland v. Darien, No. 04-K-24 (Balt. Cnty. Cir. Ct. 2024).

The Choi prosecutor case shows what malice with a phone looks like. People v. Yujin Choi, No. 24PDJ019 (Colo. OPDJ Dec. 31, 2024). A Denver D.A.’s Office prosecutor manufactured four text messages on her own phone, changed her contact label so they appeared to come from criminal investigator Dan Hines, and altered her Verizon Wireless message log to support a false sexual-harassment complaint. The forgery surfaced because the first message bore a timestamp 40 minutes after she had already reported the alleged harassment; Hines’s phone forensics showed no communications with Choi’s number; and Verizon’s subpoenaed logs contradicted her. The weekend before her devices were to be examined, Choi claimed both her iPhone and laptop had been water-damaged. The disciplinary tribunal found that not plausible. She was disbarred.

The NGH Group voice-clone custody case adds another data point. In a contested divorce-and-custody battle, the mother submitted two audio recordings purporting to capture the father making threatening statements. NGH Group’s forensic analysis identified that both files had been recorded on an iPhone and that their metadata showed creation dates ten days after the mother had subscribed to ElevenLabs’ paid voice-cloning service. The court ordered seizure and examination of her devices. She confessed.
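The forgeries in this section surfaced through the same two kinds of inconsistency: a device that could not have produced what the exhibit shows (Rossbach), and a file or message dated later than the event it supposedly records (Choi, the NGH Group matter). A minimal sketch of that screening logic follows. The `ExhibitFacts` structure, its field names, and the `provenance_red_flags` function are hypothetical, introduced only for illustration, and a flag is a reason to demand native files and an examiner, not a finding of fabrication.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional, Tuple

Version = Tuple[int, ...]  # OS version as a tuple, e.g. (9, 3, 5)


@dataclass
class ExhibitFacts:
    """Hypothetical container for facts an examiner has already established."""
    claimed_event_time: datetime            # when the proponent says the event occurred
    file_created_time: Optional[datetime]   # creation time from extracted metadata, if any
    device_max_os: Optional[Version]        # highest OS version the device can run
    feature_min_os: Optional[Version]       # earliest OS version able to show a visible feature


def provenance_red_flags(facts: ExhibitFacts, tolerance_seconds: float = 0.0) -> List[str]:
    """Return plain-language inconsistencies that warrant forensic follow-up."""
    flags: List[str] = []

    # Rossbach-style check: the exhibit shows something the device could not render.
    if facts.device_max_os is not None and facts.feature_min_os is not None:
        if facts.feature_min_os > facts.device_max_os:
            flags.append("device could not have produced a feature visible in the exhibit")

    # NGH/Choi-style check: the file postdates the event it claims to capture.
    if facts.file_created_time is not None:
        lag = (facts.file_created_time - facts.claimed_event_time).total_seconds()
        if lag > tolerance_seconds:
            flags.append("file created after the claimed event")

    return flags
```

For a Rossbach-type exhibit, `device_max_os` would be `(9, 3, 5)`; the value for `feature_min_os` must come from the examiner's own research on when the disputed feature first shipped. Creation dates can also shift innocently when a file is copied or exported, which is one more reason to work from a forensic extraction and not from party-supplied metadata.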

D. AI Hallucinations as Sanctionable Conduct

The Mata v. Avianca line is now a doctrine, not a punchline. Mata v. Avianca, Inc., 678 F. Supp. 3d 443 (S.D.N.Y. 2023) ($5,000 sanction). Park v. Kim, 91 F.4th 610 (2d Cir. 2024) (grievance-panel referral). Wadsworth v. Walmart Inc., No. 2:23-cv-00118 (D. Wyo. Feb. 24, 2025) (Morgan & Morgan attorney Rudwin Ayala fined $3,000 and pro hac vice revoked; supervising counsel and local counsel fined $1,000 each). The Wadsworth citations had been generated by Morgan & Morgan’s in-house “MX2.law” AI platform, not ChatGPT. The internal tool did not save the firm. Coomer v. Lindell, No. 22-cv-01129-NYW-SBP (D. Colo. July 7, 2025) (defense counsel sanctioned $3,000 each for an opposition brief containing nearly thirty defective citations; counsel sanctioned again in 2026 for repeated mis-citation). Johnson v. Dunn, No. 2:21-cv-1701 (N.D. Ala. July 23, 2025) (court declared monetary sanctions ineffective; instead disqualified the offending attorneys, required publication in the Federal Supplement, and gave notice to bar regulators). People v. Mostafavi (Cal. 2d Dist. Ct. App. Sept. 2025) ($10,000 sanction, the largest yet imposed by a California state court; 21 of 23 quotations in an appellate brief were ChatGPT-fabricated).

Damien Charlotin’s tracker logged 87 cases as of May 18, 2025; 486 by October 28, 2025; and 1,398 worldwide cases (including 915 U.S. cases) by April 2026. The growth curve is steeper than the bar’s adjustment to it. ABA Formal Opinion 512 (July 29, 2024) clarified that competence, confidentiality, candor, and supervision duties all apply when an attorney uses generative AI. Knowing the rule does not insulate counsel who skip the verification step.

IV. What Forensics Can and Cannot Do

The forensic question splits cleanly. Provenance is where authentication is won. Detection is where most lawyers think it is won, and they are wrong.

Provenance is the documented, verifiable history of a file from creation to court. Native device extraction, cloud or provider records, hash values, chain of custody, and (where present) cryptographic provenance manifests like C2PA Content Credentials are provenance methods. They tell the court where the file came from, what happened to it, and that the bytes have not changed. NIST SP 800-101 Rev. 1, Guidelines on Mobile Device Forensics (2014); NIST, Hashing Functions for File Integrity (2024); NIJ, Digital Evidence Policies and Procedures Manual (2018). Provenance does not prove who created the underlying event. It proves the file is what the proponent says it is.

Detection works in the opposite direction. It tries to determine whether content has been altered or AI-generated by analyzing the content itself. Pixel and compression analysis, Error Level Analysis (ELA), audio waveform analysis, electrical network frequency (ENF) analysis, deepfake classifiers, and AI-detector models all live here. Each has a role. None should anchor an authentication ruling on its own.

The detection literature is unkind. A 2025 systematic review and meta-analysis covering 56 papers and over 86,000 participants found human deepfake-detection accuracy in the low- to mid-50% range, with confidence intervals often crossing chance. Diel et al., Human Performance in Detecting Deepfakes, iScience (2025). Sarah Barrington and colleagues found that listeners correctly identified a voice as AI-generated only about 60% of the time, and when listeners heard a real person paired with that person’s AI clone, they judged them to be the same speaker roughly 80% of the time. Barrington et al., People Are Poorly Equipped to Detect AI-Powered Voice Clones, Sci. Reps. (Nature) (Mar. 2025). Automated systems do better in lab conditions and degrade fast in the wild. The Deepfake-Eval-2024 benchmark found AUC decreasing by 50% for video, 48% for audio, and 45% for image models between curated datasets and in-the-wild material. Chandra et al., Deepfake-Eval-2024, arXiv:2503.02857 (Mar. 2025). Richings et al. at the Alan Turing Institute reported a recall drop of over 30% when models were evaluated on deepfakes created with generation techniques from just six months later. Richings et al., arXiv:2511.07009 (Nov. 2025).

The operational consequence: a foundation that depends on a deepfake detector saying “fake” or “real” will not survive a Daubert challenge in 2026. Detection is screening. Provenance is proof.

The most powerful provenance tool is the cryptographic hash. A SHA-256 hash is a 256-bit digital fingerprint of a file. Two files with the same SHA-256 hash are bit-identical with overwhelming probability. Two files that differ by a single byte produce entirely different hashes. NIST FIPS PUB 180-4 (2015). Rule 902(14) explicitly contemplates hash-based authentication of digital files. Fed. R. Evid. 902(14) (allowing self-authentication of “data copied from an electronic device, storage medium, or file” by certification of a qualified person describing the copying process and verifying integrity, including by hash comparison). When a forensic examiner certifies that the exhibit’s hash matches the hash recorded at original collection, the proponent has eliminated one entire class of fight: the bytes are unchanged. Authorship and truth remain open questions. Tampering does not.
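The hash comparison an examiner certifies can be reproduced with a few lines of standard-library Python. The sketch below is illustrative only, not a validated forensic workflow; the function names `sha256_of_file` and `matches_collection_hash` are assumptions made for this example, and in practice the recorded hash would come from the examiner's collection report.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 digest of a file as 64 lowercase hex characters."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        # Read in chunks so large video files do not have to fit in memory.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_collection_hash(path: str, recorded_hash: str) -> bool:
    """Compare the file's current hash to the hash recorded at collection."""
    # Normalize case and stray whitespace in the recorded value before comparing.
    return sha256_of_file(path) == recorded_hash.strip().lower()
```

A `True` result supports the narrow proposition described above, that the bytes are unchanged since collection. A `False` result means the file offered is not the file collected, even if the two look identical on screen.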

Provenance frameworks beyond hashes are advancing. The Coalition for Content Provenance and Authenticity (C2PA) specification, supported by Adobe, Microsoft, Sony, Canon, Leica, Nikon, OpenAI, Google, Meta, BBC, Truepic, Intel, and Amazon, embeds cryptographically signed manifests describing a file’s creation and edit history. C2PA Specification v2.4 (2025). Hardware-signed manifests at the moment of capture are now available in supported cameras. One limitation. C2PA does not validate truth. It validates the chain of edits. Authenticated forgeries using a valid C2PA manifest have been demonstrated by Hacker Factor and at C2PA webinars. The standard is a future-state defensive layer, not a current-state silver bullet.

V. The Proponent’s Foundation

A foundation that holds answers six questions: What is this? Where did it come from? Who created or sent it? Has it changed? How do we know? Why is this version fair and complete?

Each answer should be supported by more than one source. The cumulative-foundation principle is everywhere in the case law. Mooney allowed video authentication on witness testimony plus circumstantial evidence. Butler allowed text authentication on phone-number recognition plus context plus a same-exchange call. Browne admitted Facebook content only on extrinsic evidence linking the defendant to the account, the messages, and the surrounding circumstances. The proponent who relies on a single screenshot and a single witness’s recognition is asking the court to do work the proponent should have done in discovery.

The model foundation language tracks NIST SP 800-101 Rev. 1 and the federal rules:

Your Honor, the proponent will establish that Exhibit 12 is the native export of the message thread at issue; that it was collected from the witness’s phone and cloud account without alteration; that the collecting examiner preserved the source device, documented chain of custody, and verified the exported files by SHA-256 hash; that the witness recognizes the number and the account, the surrounding conversation, and the distinctive content of the exchange; and that the exhibit fairly and accurately reflects the full thread, including timestamps and participants, as it appeared on the device.

That language combines Rule 901(b)(1) (witness with knowledge), Rule 901(b)(4) (distinctive characteristics), Rule 901(b)(9) (process or system), and Rule 902(14) (hash authentication) into one continuous foundation. The witness covers context. The examiner covers integrity. The hash covers byte-identity. The cumulative effect is evidence sufficient to support a finding of authenticity, and a foundation that survives Rule 403 balancing because the proponent has done the verification work the rule presumes.

Direct examination should hit, in order:

- Did you create, receive, or record this exhibit?

- On what device, platform, or account?

- Is this the original or native file? If not, what is it?

- Who had access to the device or account?

- What steps were taken to preserve it?

- Was a hash value calculated, and if so, by whom?

- How do you recognize the sender, speaker, or person shown?

- What contextual details tie the exhibit to the event?

- Is the exhibit complete, or are there omissions, crops, or edits?

- Was any enhancement performed, and if so, by whom and using what method?

Each question should be answered before the exhibit is offered. Several of the failed-foundation cases failed because the witness was put on the stand without preparation for these questions. Vayner and Griffin both involved proponents who had answers but did not develop them.

VI. The Opponent’s Challenge

The opponent’s burden is lower than the proponent’s. The opponent does not have to prove the exhibit is fake. The opponent has to show enough to require the proponent to do real authentication work. Proposed Rule 901(c) codifies that allocation, and it is already operating under existing Rule 104(a) practice.

The opening move is procedural. File a motion in limine before trial. Request a Rule 104(a) authentication hearing. Demand the native file, the source device, the cloud export, and the provider records. Issue a subpoena to the carrier or platform. The cases that produced exclusion (Puloka, Weber, Mendones) all involved early opponent action.

The cross-examination playbook tracks each provenance gap:

- You never examined the original device, correct?

- You cannot tell the Court who had access to the account or phone, correct?

- You relied on screenshots, not a native export with metadata, correct?

- You did not compute or compare hash values, correct?

- You cannot identify the software or algorithm used to enhance this file, can you?

- There are gaps in the conversation thread, correct?

- The provider certification shows Verizon retention, but not who typed the message, correct?

- Your detector has no established real-world error rate for this generator or post-processed media, correct?

- The metadata you received was produced manually by the party, not extracted through a validated forensic workflow, right?

When AI enhancement is offered as substantive evidence, file a Daubert or Frye motion. Puloka is the precedent. AI enhancement that has not been peer-reviewed by the forensic video community does not meet Rule 702’s reliability threshold. Topaz, Adobe Generative Fill, and similar tools were built for content creation, not forensic analysis. The vendors say so themselves.

Rule 403 closes the gap. Even when authentication clears the threshold, vivid digital exhibits with weak provenance can be excluded as substantially more prejudicial than probative. The continued-influence effect matters here: jurors retain visual content even after instructions to disregard. NCSC, AI-Generated Evidence: A Threat to Public Trust in the Courts (2025). Counsel arguing Rule 403 should not just cite the rule. Counsel should explain the specific cognitive risk, supported by the social-science literature.

VII. The Sanctions Frontier

Mendones is the high-water mark. Terminating sanctions plus dismissal with prejudice. Rossbach was second. Dismissal with prejudice affirmed by the Second Circuit. Choi produced disbarment. In re David-Vega (Ga. 2024) produced disbarment for a fabricated client-termination email; the Georgia Supreme Court called it among “the most serious violations under the Rules.”

The pattern: when courts find deliberate fabrication, they do not impose modest monetary sanctions. They take the case, the law license, or both. Counsel who fail to verify their own client’s evidence are exposed under inherent power, Rule 11, Rule 26(g), Rule 37, and the bar’s professional-conduct rules. ABA Formal Opinion 512 makes the duty explicit. Louisiana Act 250 codifies it for one state. Others will follow.

The single most consequential change at the firm level is engagement-letter language. Clients should be required, in writing, to: (1) preserve original digital files (no screenshots), (2) provide device access for forensic capture if needed, and (3) disclose any AI tool used to generate, enhance, or annotate any item likely to be offered as evidence. The Louisiana standard is the floor. It will become the national baseline once Rule 707 or Rule 901(c) is adopted, whichever comes first.

VIII. Practice Changes Monday Morning

Five things every litigation practice should have in place by Monday.

First, an updated engagement letter and intake form imposing the disclosure and preservation duties just described. Stop collecting evidence from clients in screenshot form. Require native files. The conversation is shorter than counsel fear. Most clients comply once the reason is explained.

Second, a standing relationship with one digital-forensics expert who can handle image, video, and audio. Budget $3,000–$10,000 for at least one forensic engagement per significant matter where digital evidence is contested. The cost is far below the cost of losing a case to a deepfake or being sanctioned for one. Names that recur in the case law: iDiscovery Solutions, NGH Group, Forensic Photonics, Right Forensics, Primeau Forensics, Lucid Truth Technologies.

Third, a Rule 26(f) ESI protocol that mandates AI disclosure, native file production, and early exhibit-list exchange. The protocol should explicitly prohibit screenshots of critical evidence. The model language is already circulating in Quinn Emanuel and Federal Judicial Center training materials. Adopt it now rather than after a sanctions order.

Fourth, mandatory training on (a) laying foundation for an iPhone-captured video (witness identification, device, chain, hash), (b) challenging the foundation of a digital exhibit, (c) making a Daubert or Frye record on AI-enhanced evidence, and (d) spotting the markers in Rossbach (emoji or iOS mismatch), Mendones (metadata versus visible features), and the NGH Group case (creation date versus claimed-event date). The patterns are consistent enough to teach in a half-day session.

Fifth, when the stakes warrant it, capture on a C2PA-enabled device and preserve the SHA-256 hash at the moment of collection. Maintain a clean chain-of-custody log naming each custodian, date, and device. Use forensically sound tools when imaging: FTK Imager, X-Ways, Cellebrite for devices; X1 Social Discovery, Page Vault, or Hunchly for online content. The proponent who arrives at trial with hash, chain, and provenance has eliminated the largest authenticity fight before it begins.
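The chain-of-custody log described in the fifth item can be kept in any format, so long as each entry names the custodian, the date, the device, and the hash at that moment. The following is a minimal sketch under stated assumptions: `CustodyEntry`, `record_transfer`, and `first_integrity_break` are hypothetical names, and a real log would be maintained by the forensic tool or examiner, not by ad hoc script.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class CustodyEntry:
    """One hand-off in the exhibit's history (hypothetical structure)."""
    custodian: str
    device: str
    action: str
    timestamp_utc: str
    sha256: str


def record_transfer(log: List[CustodyEntry], custodian: str, device: str,
                    action: str, file_bytes: bytes,
                    when: Optional[datetime] = None) -> CustodyEntry:
    """Append an entry recording who held the file, where, when, and its hash."""
    when = when or datetime.now(timezone.utc)
    entry = CustodyEntry(custodian, device, action, when.isoformat(),
                         hashlib.sha256(file_bytes).hexdigest())
    log.append(entry)
    return entry


def first_integrity_break(log: List[CustodyEntry]) -> Optional[int]:
    """Return the index of the first entry whose hash differs from the
    hash recorded at collection (entry 0), or None if every entry matches."""
    if not log:
        return None
    baseline = log[0].sha256
    for index, entry in enumerate(log[1:], start=1):
        if entry.sha256 != baseline:
            return index
    return None
```

If `first_integrity_break` returns `None`, every custodian handled the same bytes that were collected. If it returns an index, that entry identifies the hand-off at which the file changed, which is the custodian opposing counsel will want to examine.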

Coda

The doctrinal architecture has not changed. The pressure on it has, and the cases now coming out under existing rules show what that pressure looks like at the margins: dismissed complaints, disbarred lawyers, excluded video, sanctioned counsel. None of those rulings required Rule 707 or Rule 901(c). Each was within the reach of Rule 104, Rule 901, Rule 702, and Rule 403 as they stood when Lorraine v. Markel was decided in 2007. The rules were always enough. What changed is how much work the proponent has to do to clear them.

The practitioner who treats authentication as a checkbox loses. The practitioner who treats it as a sustained, evidence-dense exercise in provenance wins. That has been true since the Federal Rules of Evidence were enacted. AI made it visible.